...corrected to this:

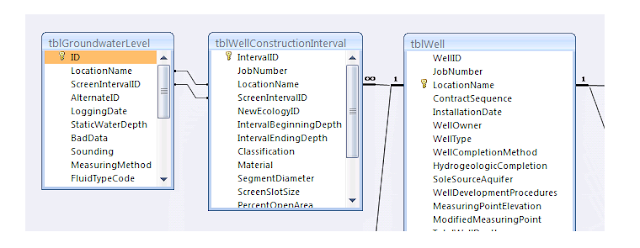

Of course this made more trouble, adding and then correlating water levels with the new well screen identifiers (I just used "001" to replace all the Null values in ScreenIntervalID). The next issue was a bit embarrassing -- my nice, clean data in tblGroundwaterLevel ... not so clean. Found a number of violations, nulls, and weird missing data in this table. Not surprising when accumulating data from a dozen different sources for one analytical tool, I have to constantly remind myself.

Enter the index. This is a way of enforcing rules between records of data. Check out UniqueKey; it's telling the tblGroundwaterLevel that records cannot repeat these four fields:

- LocationName

- ScreenIntervalID

- LoggingDate

- MeasuringMethod

Additionally, including "MeasuringMethod" guards against a key violation if there's a manual measurement at exactly the same moment as a transducer measurement is made, providing the data manager specifies different methods for the different data.

Keys guard against:

- duplicate records

- ex: importing from two different Excel files with overlap; or

- importing the same transducer file more than once.

- incomplete records

- blank LoggingDates (this was a problem bringing in data from a northwest-based regulator)

- blank levels

- What to do when the DryWellFlag is true -- include a sounding for StaticWaterDepth? Fake a value?

- We're guarding against duplicate WellID (LocationName/ScreenIntervalID) entries for the same date -- another issue I've seen importing data from a variety of sources is conflicting info for the same date/well -- so you want to catch imports like this:

(Wrinkle: while I could have -- probably should have -- made this the PrimaryKey (ensuring no nulls or duplicates in the future, and probably better compatibility with Access 2010), calling it a UniqueKey was easier because I could ignore nulls for a moment; save the index; go fix duplicates and Null ScreenIntervalIDs; and come back to enforce the index.)

Now to implement this in our template DB.

No comments:

Post a Comment